AI-Driven Interviews are Helping Push HR Leaders Further Down the Path Toward Safe AI Adoption at Work

Key Points

As AI tools move from résumé polishing into live interviews, hiring is shifting from a workflow efficiency issue to a broader integrity and security risk.

Amy Casciotti, Vice President of Human Resources at TechSmith, says real-time AI prompting, spoofing tactics, and identity manipulation are redefining recruitment as a verification challenge.

She argues that as automation grows more sophisticated, companies that reinvest in face-to-face evaluation and live skills validation will be better positioned to protect hiring quality and organizational trust.

AI can be helpful, but authenticity still matters. Whether it’s on the employer side or the candidate side, we still need to figure out who the authentic people are in this hiring process.

Amy Casciotti

Vice President, Human Resources

TechSmith

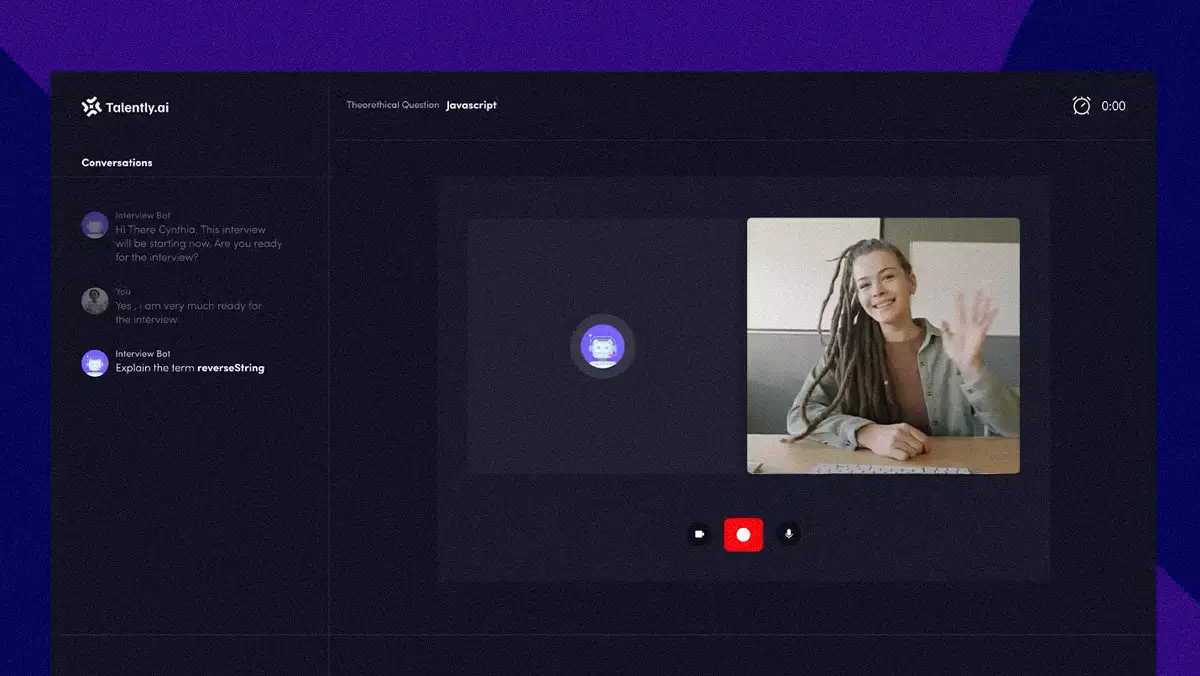

AI’s growing role in hiring is pushing companies back to the basics. What began as a surge of AI-polished résumés has quickly escalated into more serious forms of deception. Hiring managers are now dealing with candidates using real-time AI during interviews, along with increasingly sophisticated impersonation and identity fraud. To stay ahead, hiring teams are developing new strategies to verify candidate identity and assess skills.

Working through this evolution is Amy Casciotti, Vice President of Human Resources at TechSmith and a member of the Forbes Human Resources Council. As a leader at a company that builds AI into its own products, Casciotti has guided the HR department through periods of rapid company growth. That background gives her a clear perspective on the difference between authentic and artificial candidates, and it has shaped a strategy built around verifying real people and real skills.

“AI can be helpful, but authenticity still matters. Whether it’s on the employer side or the candidate side, we still need to figure out who the authentic people are in this hiring process,” Casciotti explains. From her view, the turning point came when what seemed like a workflow problem (a surge of AI-polished applications) became a question of integrity. The issue moved from résumé inflation to active deception during interviews, a shift that is redefining hiring as a security challenge.

Dialing for deception: Casciotti points to “spoofing,” a practice where candidates in other countries use VPNs or pay U.S.-based stand-ins to fake their identity and location. In some cases, companies have ended up hiring people who don’t actually exist. The issue has also been tied to state-backed efforts, including North Korea’s remote work scam. Federal agencies have responded with warnings, coordinated enforcement actions, and alerts about the growing threat to U.S. businesses. “We’ve seen people using AI during live interviews, having it listen to the question and then reading back what it spits out. That’s why authenticity really does matter and why we’re bringing final stage candidates in person,” Casciotti explains.

To address these evolving risks, TechSmith is returning to basics. Casciotti says the company is training interviewers to recognize subtle signs of inauthenticity after discovering that AI can easily clear traditional screening hurdles. It has also renewed its emphasis on college recruiting and in-person engagement, while adding verification steps such as live pair programming to ensure candidates can demonstrate real-world skills.

A little too perfect: Because humans think as they speak, dialogue has natural hesitations and self-corrections. Casciotti says candidates who rely on AI assistance sound unnervingly polished. Their responses flow perfectly, without the usual pauses that signal someone is recalling information on the spot. “The first clue is when candidates don’t have normal speech patterns,” she observes. “They talk like they’re reading from a textbook. Is every answer the perfect answer? AI doesn’t tend to have mistakes in it, so as interviewers, we need to be more tuned into that.”

Polish vs. impersonation: For Casciotti, the line between acceptable assistance and outright deception is sharp and practical. She likens AI assistance in job applications to a headhunter refining a candidate’s profile, an acceptable form of support. The line, she argues, is crossed when enhancement turns into misrepresentation. “If you’re using AI to say you can do something you can’t, you’re going to get found out. That becomes an integrity issue, and that’s where the balance is between augmentation and honesty.”

For Casciotti, the path forward starts with first principles. She sees face-to-face hiring as a prudent investment in long-term stability, especially after internal analysis showed AI detection software to be financially impractical. The finding led TechSmith to double down on its human-centric processes, betting that success in 2026 will hinge less on pursuing smarter hiring through technology and more on rebuilding trust. “I think it gets back to that face to face still has a place. AI can’t fake that. It can’t replace that. It helps us to see the more authentic person when they’re not hiding behind a computer screen,” she concludes.
