How Leaders Are Balancing Efficiency and Accountability as AI Enters Core HR Decisions

Key Points

AI is speeding up HR work but still makes mistakes and lacks widespread governance, opening up companies to privacy risks and legal trouble.

Shelley Majors, a Fractional Chief People Officer and founder of Boardwalk Human Resources Consulting, explains that leaders should adopt AI with discipline by championing human oversight.

She advises setting clear AI rules, such as keeping the technology away from sensitive tasks like payroll and investigations, and managing AI adoption with transparent communication to employees to maintain trust.

If you want your company to advance, you’re going to have to embrace AI at some point. This means your employees are going to have to embrace AI as well. It’s not going away.

Shelley Majors

Founder

Boardwalk HR Consulting

Artificial intelligence holds enormous potential for human resources, but the technology is still working through its growing pains. It can screen a thousand resumes for executive roles in minutes, yet still requires manual review to catch costly errors. It can combine two job descriptions instantly, only to introduce responsibilities that were never requested. As AI expands across HR functions, the need for human oversight becomes more important, not less. Risks such as hallucinations and data exposure mean that scaling AI across the enterprise requires deeper thinking, stronger safeguards, and more disciplined governance.

Balancing scale and safety is an act that Shelley Majors, a Fractional Chief People Officer with over 27 years of executive HR leadership, knows well. As Founder of Boardwalk Human Resources Consulting and a member of the C-Suite Network’s Thought Council, her work guiding businesses through M&A and organizational growth puts her front and center in the AI adoption discourse. She believes that the path forward requires embracing the technology with discipline, while understanding no tool can replace human wisdom.

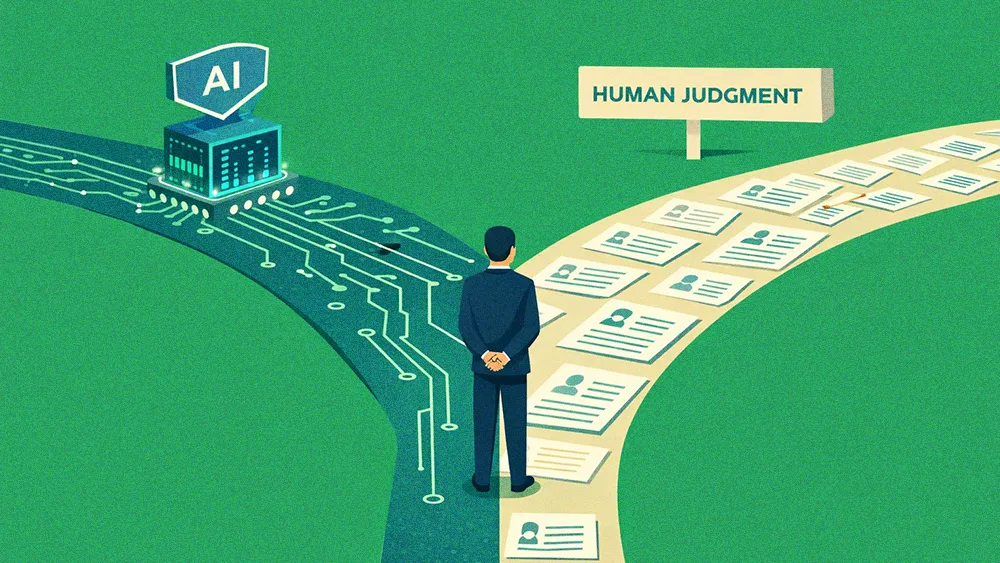

“If you want your company to advance, you’re going to have to embrace AI at some point. This means your employees are going to have to embrace AI as well. It’s not going away,” she says. But adopting AI doesn’t mean giving it full agency. Majors is a pragmatist who supports using AI for simple queries, and chatbots like Ask BambooHR can be powerful tools for freeing up HR professionals to focus on higher-value work. But her enthusiasm is balanced by a clear-eyed view of the technology’s flaws. This new dynamic is leading many organizations to place a higher premium on human judgment, as the growing legal considerations for HR professionals and AI’s tendency to make mistakes increase the demand for complementary human skills.

Use AI with intention: Majors calls for thoughtful use cases of AI, and steering clear of applying it to sensitive work better done by people. “I’m in support of a chatbot that can answer simple questions, especially if you’re a small team or a team of one. I just don’t see how you can use AI for payroll purposes. That is something you need to have a hands-on person for.”

A costly shortcut: Without proper oversight, the convenience of AI can create clear business risks. She points to a cautionary tale overheard at a recent M&A forum that illustrates the problem of automation without review: “One of the things they were talking about was a lawyer who used ChatGPT to create a legal document that they presented in court, and it was incorrect because they didn’t review the document before presenting it.”

This leap in efficiency raises another organizational question for leaders: When a task that once took an hour now takes five minutes, how can they ensure time is being used productively? The question touches on pressing workplace topics such as performance, ROI, and concerns echoed by academic analysis of AI productivity vs. skill erosion.

The time-value gap: Integrating AI into workplace systems brings up operational complexities for HR professionals around productivity. Majors says it forces them to ask questions like, “What are you doing all day long with your time, since you’ve turned in a document that should have taken you quite some time to do? Is my money being utilized properly with the use of AI?” Clear norms on when AI use is appropriate, how it should be disclosed, and what human review is required can make productivity gains visible and comparable, reducing suspicion about ‘unused’ time.

One of the biggest issues in AI adoption is that “there really is no governance in place,” Majors says bluntly. For HR departments handling sensitive employee information, that absence of formal rules creates both blind spots and liability. She believes the solution starts with clear guardrails and internal policies, a foundational step in any responsible AI in HR strategy.

The data danger zone: Governance becomes especially urgent in cases of misconduct. “If I put ‘John Smith’ and a harassment investigation into a chat tool, where does it go?” Majors asks. “Who has access to it?” One practical mitigation is anonymization. “If you’re going to use AI, then do not use names… ‘Employee A,’ ‘Employee B.’” But even that safeguard has limits. Formal investigations require names, documentation, and defensible decisions. In high-stakes moments, abstraction gives way to accountability and reinforces Majors’ core point that AI can assist, but an accountable human must remain in the loop.
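
For teams that want to make the “Employee A,” “Employee B” habit routine, the substitution can be scripted before any text reaches a chat tool. The following is a minimal sketch, not a vetted privacy control: the function name `pseudonymize` and the sample sentence are hypothetical, and it only replaces names that a person has explicitly listed. It will not catch nicknames, misspellings, job titles, or other identifying details, so a human still needs to read the result before sharing it.

```python
import re


def pseudonymize(text, names):
    """Replace each listed name with a stable label such as 'Employee A'.

    Hypothetical helper for illustration. Returns the rewritten text and
    the name-to-label mapping, which should stay with the accountable
    HR professional and never be pasted into the AI tool.
    """
    if not names:
        return text, {}
    if len(names) > 26:
        raise ValueError("This sketch only supports up to 26 names (A-Z).")

    # Assign labels in the order the names were supplied.
    mapping = {
        name: f"Employee {chr(ord('A') + index)}"
        for index, name in enumerate(names)
    }

    # Match longer names first so "John Smith" wins over a listed "John".
    ordered = sorted(mapping, key=len, reverse=True)
    pattern = re.compile("|".join(rf"\b{re.escape(name)}\b" for name in ordered))

    # A single pass avoids re-replacing text inside an inserted label.
    redacted = pattern.sub(lambda match: mapping[match.group(0)], text)
    return redacted, mapping


note = "John Smith reported that Jane Doe sent the message. John Smith has two witnesses."
redacted, mapping = pseudonymize(note, ["John Smith", "Jane Doe"])
print(redacted)
```

Keeping the mapping separate is what lets the formal record be re-identified later, when, as Majors notes, names and documentation are required.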

The AI conversation can create friction between employees and leadership. Workers tasked with training AI systems may worry they are automating parts of their own jobs, while HR leaders must weigh efficiency gains against legal exposure, compliance gaps, and the reputational risks of over-automation. In some cases, companies are already confronting costly discrepancies created by relying too heavily on automated outputs without sufficient oversight. Navigating this shift, Majors argues, ultimately comes down to disciplined change management in which leadership builds trust through transparency and fairness. She believes this tension will prompt a recalibration instead of a retreat from AI, resulting in a more measured integration of it into HR workflows.

“You’re going to need to have those employees back to make sure that the information the AI is giving is accurate,” she says. “Without the employees, no one knows if the information is accurate.” For HR leaders, the mandate is balance: keep humans at the core, and let technology simply serve as a support.
